White Coats and Iron Lungs

How Faith in Medical Experts Became a Pillar of the Fifth Branch (The Deep State)

Post Update

Thanks to Instapundit for the link.

If you’re coming over from Instapundit, welcome!

This piece is part of my ongoing Observations from the Late Republic series, where I explore how America today echoes the late Roman Republic — particularly the rise of a permanent expert/administrative class (what I call the Fifth Branch) and the tensions it creates with the citizenry.

If you enjoyed this one, you might also like:

The Republic’s Fourth Branch — America’s unique invention of an informed, armed, and sovereign citizenry

The Rise of the Fifth Branch — how the administrative state grew after WWII

The full series lives here: Observations from the Late Republic

Introduction

There is a particular kind of power that grows best in the presence of visible human suffering.

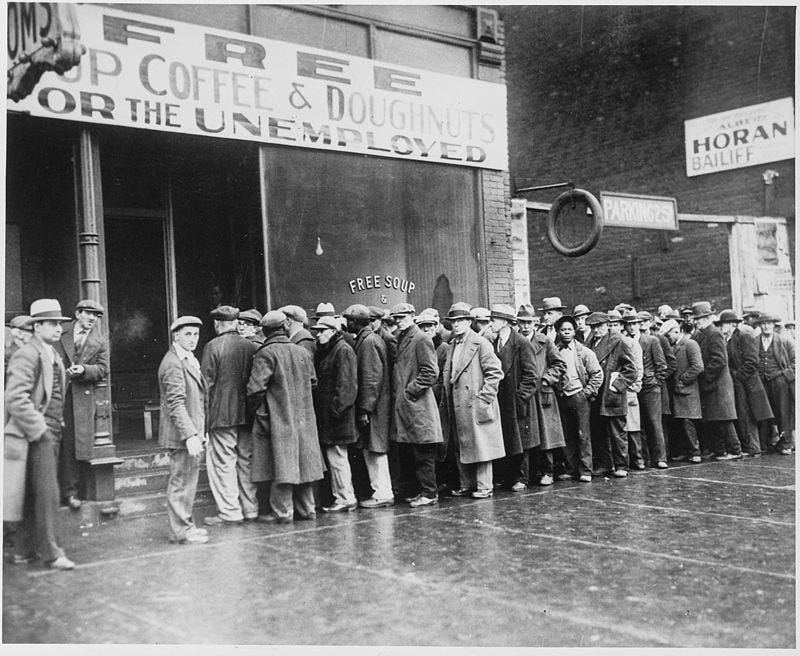

When people can see the pain — children trapped in iron lungs, families standing in bread lines, the elderly wasting away in loneliness — they become willing to grant extraordinary authority to anyone who promises relief. The more visible and heart-wrenching the suffering, the stronger the emotional permission slip.

This is the hidden engine behind one of the most durable pillars of the modern Fifth Branch: the moral authority of the expert class.

In the optimistic years of the Great Society, it became common to hear that poverty would be defeated the same way polio had been — find the right scientific solution, apply it aggressively through government action, and the problem would disappear.[1] That framing proved remarkably sticky. As a child in the 1970s, I still remember hearing echoes of it: the confident belief that experts in Washington could “solve” social problems with the same precision that medicine had solved disease.

Nowhere has this mechanism operated more powerfully — or more successfully for a time — than in medicine itself. For more than a century, the white coat carried a special kind of legitimacy built on genuine victories against terrifying, highly visible enemies: smallpox, polio, bacterial infections. Those successes were not abstract. You could see the empty iron lungs, the children walking again. That earned trust became cultural capital — slowly extended from treating acute disease to managing diet, lifestyle, risk, behavior, and eventually entire populations “for their own good.”

But medicine was not the only domain that learned this lesson.

The New Deal and especially the Great Society represented a parallel play in the realm of government charity. Here, too, the strategy was the same: make suffering visible, tug on the heartstrings, and position the expert administrative state as the compassionate, scientific solution. Poverty became “a problem we can solve the way we solved polio.”[1]

Deep Roots: From Plague to Prestige

The instinct to turn to “experts” during times of visible suffering is not a modern invention. It stretches back centuries, rooted in moments when ordinary people faced horrors they could not explain or control.

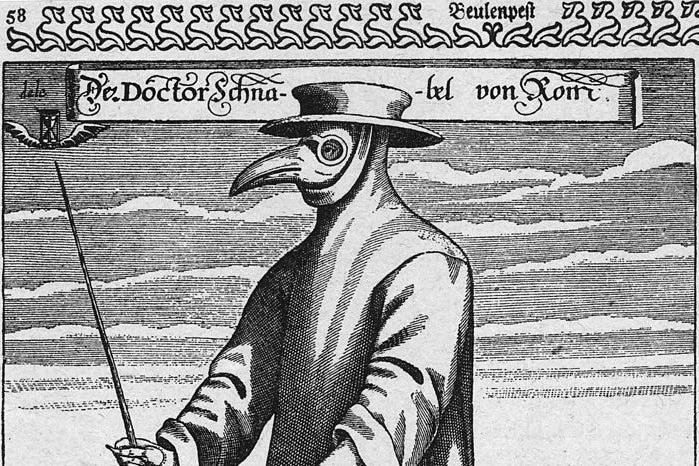

During the Black Death in the 14th century, Europe lost perhaps a third of its population in a few short years. Cities became open graves. Families abandoned the dying in the streets. The atmosphere was so grim that one can almost hear the macabre call from Monty Python and the Holy Grail: “Bring out your dead!”

In that atmosphere of terror, two very different responses emerged. Some turned to flagellants — wandering groups who whipped themselves in public penance, hoping God would lift the curse. Others looked to the small but growing class of university-trained physicians, men who claimed special knowledge of humors, astrology, and ancient texts.

The physicians rarely cured anyone. Their treatments (bloodletting, potions, and quarantine advice that was often little more than guesswork) were largely ineffective. Yet even then, a pattern began to form: when suffering was widespread and terrifying, people granted a special kind of deference to those who claimed learned, systematic knowledge — however limited that knowledge actually was.

The plague also triggered massive social and economic changes. Labor shortages drove up wages, weakened feudal structures, and shifted power toward the surviving commoners. Quarantines, rudimentary as they were, became one of the first widespread examples of government-imposed restrictions justified by public health. The trauma of the Black Death left a lasting imprint: fear of invisible killers made societies more willing to accept authority from those who claimed to understand them.

This tension between desperation and deference repeated itself across centuries. The Enlightenment brought new tools and greater confidence. Edward Jenner’s development of smallpox vaccination in the late 18th century was an early breakthrough: a clear, observable success that began to shift public trust toward empirical methods. In the second half of the 19th century, the work of Louis Pasteur, Robert Koch, and Joseph Lister delivered something even more powerful: germ theory and antisepsis. Diseases that had once been mysterious and inevitable suddenly had visible, microscopic causes and practical defenses.

These were not abstract laboratory wins. They translated into real, observable improvements in surgical survival rates, cleaner hospitals, and eventually mass vaccination campaigns. For the first time, “medical science” could point to concrete victories against ancient killers. The prestige of the white coat (or at least of the trained physician) began its long ascent from respected craftsman to near-oracular authority.

By the early 20th century, this foundation was firmly in place. When crisis struck, societies increasingly turned not to priests, local leaders, or folk remedies alone, but to a professional class that claimed both technical competence and moral purpose.

The stage was set for the modern era, when visible suffering would meet organized government power and scientific optimism on a scale never before seen.

The 20th Century Acceleration

By the early 1900s, the foundation was already laid. Centuries of plague, Enlightenment science, and germ theory had slowly elevated the prestige of the trained expert. But it was the 20th century, with its unprecedented scale of visible suffering and organized government power, that turned that prestige into a central pillar of the emerging Fifth Branch.

The Spanish Flu of 1918–1919 arrived at the worst possible moment. The world was already exhausted by the mechanized slaughter of World War I. Then came a virus that killed healthy young adults in days, often drowning them in their own fluid-filled lungs. Governments responded with quarantines, public health orders, mask mandates, and business closures — tools that felt familiar during wartime but now reached deep into civilian life. For many Americans, this was their first direct, personal encounter with centralized government authority justified in the name of public health.

The flu acted as a powerful catalyst. It normalized the idea that experts and officials could, and should, restrict personal freedom during a crisis. It also helped elevate the standing of the emerging public health bureaucracy. When the crisis finally passed, much of that emergency infrastructure remained.

Then came polio.

If the Spanish Flu demonstrated the power of fear, polio demonstrated the power of hope. The disease struck children with terrifying visibility: iron lungs, leg braces, paralyzed limbs, and the haunting images of once-vibrant kids confined to wheelchairs or hospital wards. It was made even more personal because Franklin D. Roosevelt, paralyzed by the disease in 1921, years before he became President of the United States, was one of its most visible victims. The March of Dimes turned the fight against polio into a national crusade. When Jonas Salk’s vaccine proved effective in 1955, the victory was celebrated as a triumph of American science and expertise. Combined with the earlier elimination of smallpox from the United States (a disease so thoroughly defeated that people just a bit younger than me no longer carry the distinctive round vaccine scar on their upper arm), these victories felt like miracles of modern science. Polio wasn’t just defeated; it was solved by experts.

That success created something profound: deep, generational trust. For millions of parents, the white coat and the laboratory had literally saved their children. This earned credibility became cultural capital that extended far beyond acute infectious disease. It helped legitimize the growing belief that trained experts could systematically solve complex human problems if given enough authority and resources.

The New Deal and especially the Great Society took the same logic and applied it to economic and social suffering. Just as medicine had confronted disease, government would now confront poverty. Visible misery — bread lines in the 1930s, urban decay and hungry children in the 1960s — was met with the same emotional appeal: “We solved polio. We can solve this.”

Government positioned itself as the compassionate expert solution. Massive new bureaucracies were built — agencies, social workers, planners, and poverty programs — all claiming both technical competence and moral urgency. What began as targeted relief gradually expanded into a permanent administrative apparatus that claimed the right to manage large portions of American life “for the greater good.”

From Earned Trust to Cultural Capital and Overreach

Success breeds confidence, and repeated success breeds something even more powerful: a halo effect.

By the middle of the 20th century, medical science had compiled an impressive string of victories. Smallpox had been driven to the brink of eradication. Polio, once a terror that paralyzed children and even a future president, was being pushed back by a vaccine. Antibiotics had turned once-deadly infections into routine problems. Each triumph was visible, measurable, and deeply emotional. Parents no longer feared their children would be crippled or disfigured by diseases that had haunted humanity for centuries.

That earned trust did not stay confined to the doctor’s office.

It became cultural capital — moral authority that could be spent in other domains. The same logic that justified aggressive public health measures during epidemics began to stretch into everyday life: diet, exercise, smoking, seatbelts, child-rearing practices, and eventually broader lifestyle regulation. The white coat, once a symbol of competence in treating acute illness, gradually became a symbol of authority over how people should live.

A parallel expansion occurred in the realm of government charity. The early successes of the New Deal in alleviating the worst miseries of the Depression, followed by the ambitious programs of the Great Society, created their own halo. Poverty programs, welfare expansions, public housing initiatives, and federal education efforts were sold with the same emotional appeal: “We solved polio. We can solve poverty.” Visible misery — hungry children, dilapidated inner-city neighborhoods, elderly Americans living in deprivation — was met with the promise that expert government intervention could deliver the same kind of decisive victory medicine had achieved.

What began as targeted relief for genuine hardship slowly morphed into something larger and more permanent: a vast administrative apparatus of planners, social workers, poverty experts, and bureaucrats who claimed not only technical competence but also moral superiority. The helping hand of government became institutionalized. Programs designed for temporary assistance became enduring entitlements. The language of compassion gradually blended with the language of control.

In both tracks — medicine and government charity — the same dangerous transition occurred:

Earned trust, rooted in real accomplishments against visible suffering, was quietly converted into assumed authority. What had once required continual demonstration of results became an almost religious faith in the expert class itself. “Trust the science” and “trust the program” became moral imperatives as much as practical ones.

This halo effect proved remarkably resilient. Even when results slowed or unintended consequences mounted, the cultural deference often remained.

The Fracture

Every pillar built on earned trust eventually faces a test: what happens when the results no longer match the promises — or when the promised solutions appear to make things worse?

The first major cracks appeared well before COVID-19.

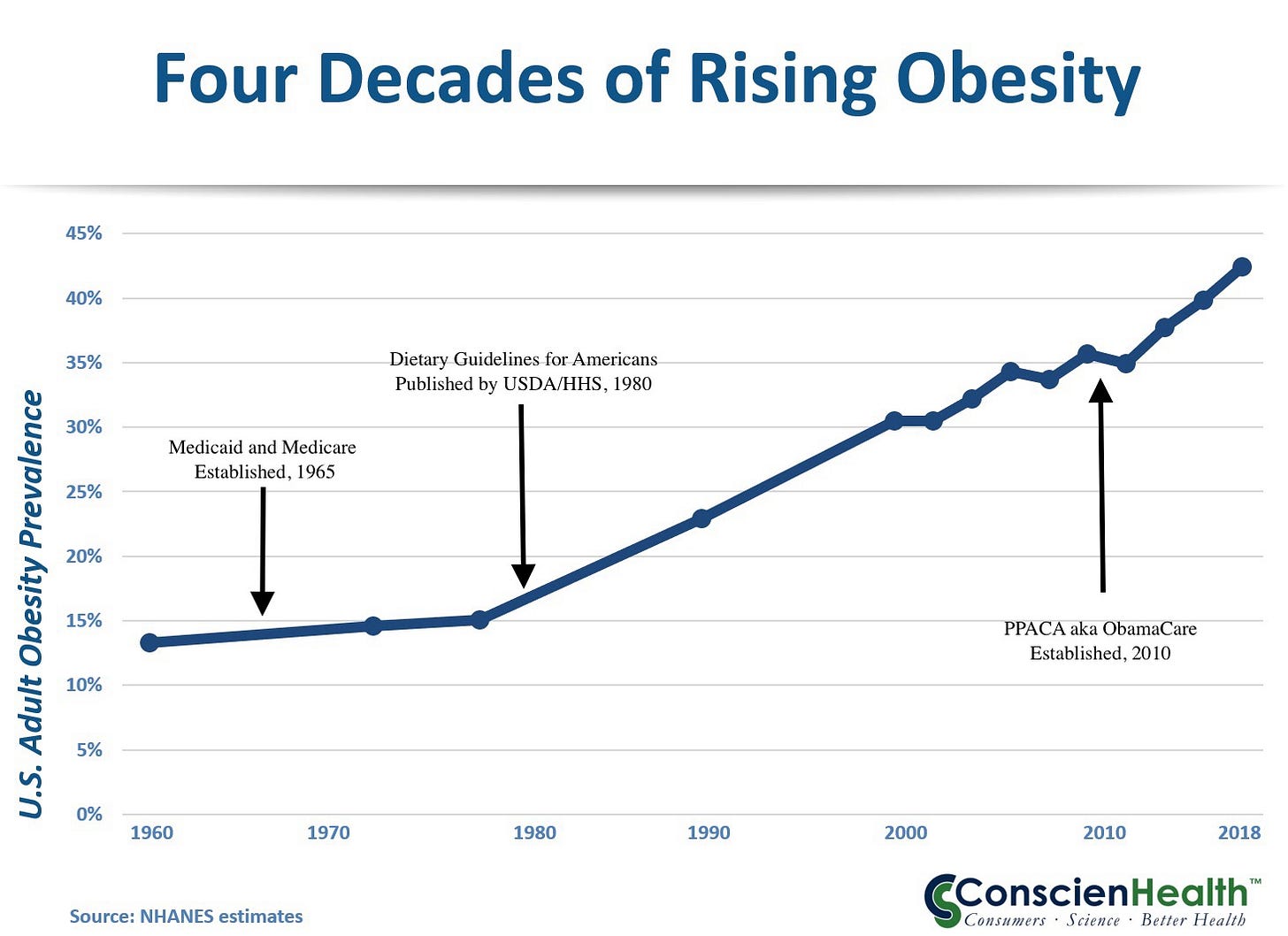

By the early 2010s, a growing body of research was showing that official dietary guidelines — emphasizing low-fat, high-carbohydrate eating for decades — had played a significant role in the explosion of childhood obesity and Type 2 diabetes. What was once hailed as responsible public health advice increasingly looked like a contributor to the very problems it claimed to solve. Rates of obesity and early-onset diabetes continued climbing even as Americans followed the low-fat recommendations. The halo around dietary experts began to dim.

Then came COVID-19, which accelerated the fracture dramatically.

What began as a genuine public health emergency quickly revealed the limits — and the overreach — of both the medical and government charity pillars working in tandem. Public health authorities, backed by government power, imposed sweeping restrictions on daily life: lockdowns, school closures, business shutdowns, mask mandates, and vaccine requirements. Much of it was justified with the same moral framing that had worked for decades: “We’re doing this to protect the vulnerable. Trust the experts.”

But this time, the halo cracked wide open.

Guidance changed repeatedly. Early assurances about masks, transmission, and natural immunity were walked back or contradicted. Dissenting scientists were censored or deplatformed. Economic and social costs — especially to children and working-class families — were often downplayed. The same institutions that had once delivered polio vaccines now appeared more focused on control and narrative management than on clear, consistent competence.

Here’s me in June 2020, proudly modeling my brand-new “Arnold Schwarzenegger” mask before heading out in public. Because nothing says “this will definitely stop an airborne virus” like a thin cloth mask with Arnold’s face on it.

At the same time, the government charity pillar faced its own reckoning. Decades of massive spending on anti-poverty programs had produced mixed results at best. Persistent poverty in some communities remained stubbornly resistant to top-down solutions. It began to appear that simply giving the money directly to the poor might have produced better outcomes than the vast bureaucratic apparatus built around them. The expert class increasingly responded to criticism not with better results, but with accusations of bad faith.

The public began to notice the pattern.

What had once felt like genuine compassion and competence increasingly looked like institutional self-preservation. The language of “public health” and “social justice” started to feel like rhetorical tools for expanding control rather than solving problems. Trust eroded, especially among those who bore the heaviest costs of the policies — and among parents watching childhood obesity and diabetes rates rise despite (or partly because of) decades of official dietary guidance.

This fracture is significant because these two pillars — medical expertise and government charity — had become two of the strongest sources of moral legitimacy for the Fifth Branch. When citizens begin to question whether the experts are still competent, or whether the compassion is still genuine, the entire edifice becomes brittle.

In Late Republic terms, this is dangerous territory. When large portions of the citizenry (the Fourth Branch) lose faith in the institutions that claimed both technical superiority and moral superiority, the system loses its most important stabilizing force: voluntary consent. What remains is raw power, polarization, and the growing temptation to use lawfare, censorship, or coercion to maintain control.

The Fifth Branch is discovering what previous elites eventually learned: authority built on visible suffering and promised solutions is powerful — but only as long as the solutions keep coming and the suffering appears to be addressed. When the halo slips, the backlash can be sudden and unforgiving.

The question now facing America is whether this loss of trust in two of the Fifth Branch’s most important pillars will lead to reform and renewal — or to further entrenchment and conflict.

The Republic has faced such tests before. And survived.

[1] This framing was common in mid-1960s policy discussions surrounding the War on Poverty. See Richard H. Leach, “The Federal Role in the War on Poverty Program,” Duke Law Journal (1966).

Further Reading

Deep Roots (Plague to Germ Theory)

Ken Follett, World Without End (sequel to The Pillars of the Earth) – A highly readable novel that vividly captures the devastation of the Black Death, the rise of university-trained physicians, quarantines, and the massive social upheaval that followed. While fiction, it draws heavily on historical research.

Barbara Tuchman, A Distant Mirror: The Calamitous 14th Century – Excellent non-fiction account of the Black Death and its long-term effects on European society.

The 20th Century Acceleration (Spanish Flu, Polio, New Deal & Great Society)

John M. Barry, The Great Influenza: The Story of the Deadliest Pandemic in History – The definitive modern account of the 1918 Spanish Flu and its political/social consequences.

David M. Oshinsky, Polio: An American Story – Outstanding history of the polio epidemic, FDR’s role, the March of Dimes, and the Salk vaccine triumph.

Amity Shlaes, The Forgotten Man: A New History of the Great Depression, and Great Society: A New History – Balanced looks at the New Deal and Great Society eras, including the expansion of government power and the expert class.

From Earned Trust to Cultural Capital & The Fracture

Nina Teicholz, The Big Fat Surprise – Deep dive into how dietary guidelines contributed to rising obesity and diabetes rates.

Ioannidis et al., various papers on COVID policy overreach and shifting guidance (especially on lockdowns, masks, and natural immunity).

Greg Lukianoff and Jonathan Haidt, The Coddling of the American Mind – Helpful context on how “safetyism” and institutional trust dynamics evolved in recent decades.

General / Late Republic Lens

Mike Duncan, The Storm Before the Storm – Excellent narrative history of the Late Roman Republic and the erosion of trust in institutions.

Edward Gibbon, The History of the Decline and Fall of the Roman Empire (selected chapters on the Gracchi through the early Empire) – Classic exploration of institutional decay.


#Observations #FifthBranch #MedicalAuthority #GreatSociety #PublicHealth #LateRepublic

The worst part about all of this is that the confidence in 'we solved polio' is built on a catastrophe of poor decisions. We narrowed a multivariate problem to a single cause, then claimed victory while redefining the disease, and then carried that hubris over to the economy, which is just as multivariate.

More on the polio problem here: https://www.polymathicbeing.com/p/polio-pesticides-and-polymaths

Also, the 1918-1919 pandemic in the USA was over in three waves within 18 months, with no antibiotics, no widespread use of vaccines, and no modern medical practices. With all three available in the 21st century, we are still dealing with deaths and the consequences of public health's stupid choices six years after the start.